Updated: Index to all posts in this series is here!

I thought today’s post would be a good opportunity to lay out some fundamentals of how automated tests work. The mechanics of test automation vary extraordinarily based on what platform you’re working on, and even within platforms you’ll see vastly differing approaches based on whatever testing framework/toolset you’re using.

Testing frameworks have a number of commonalities, regardless of platform and implementation. You’ll have a test runner which executes the tests. Test runners may live in the IDE you’re using to write your tests, they may be a separate GUI, or they may be a command line runner. Command line test runners make it possible to run your tests in a number of different environments such as a build or continuous integration server. The tests themselves will likely be organized, depending on the framework/tool, into classes, fixtures, scenarios, or something else. You generally point your test runner at these fixtures and the runner noodles out what tests are contained within those groups. The runner will execute all those tests and give you back a report on the pass/fail status.
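To make the discover/execute/report cycle concrete, here’s a rough sketch of a toy runner in C#. This is not how NUnit or any real runner is implemented; `SketchTestAttribute`, `SampleTests`, and `SketchRunner` are names I made up for illustration. It just shows the general idea: find the marked methods in a fixture, invoke each one, and count passes and failures.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Hypothetical marker attribute for this sketch only.
// Real frameworks ship their own (NUnit uses [Test]).
[AttributeUsage(AttributeTargets.Method)]
public class SketchTestAttribute : Attribute { }

// A made-up fixture: one passing test, one failing test, one ordinary method.
public class SampleTests
{
    [SketchTest]
    public void Passes() { }

    [SketchTest]
    public void Fails() { throw new Exception("expected 200 but was 201"); }

    public void NotATest() { } // no attribute, so the runner skips it
}

public static class SketchRunner
{
    // Finds every public instance method marked [SketchTest] on the fixture,
    // invokes it, prints a line per test, and returns pass/fail counts.
    public static (int Passed, int Failed) Run(Type fixtureType)
    {
        int passed = 0, failed = 0;
        object fixture = Activator.CreateInstance(fixtureType);

        var tests = fixtureType
            .GetMethods(BindingFlags.Public | BindingFlags.Instance)
            .Where(m => m.GetCustomAttribute<SketchTestAttribute>() != null);

        foreach (MethodInfo test in tests)
        {
            try
            {
                test.Invoke(fixture, null);
                passed++;
                Console.WriteLine("PASS " + test.Name);
            }
            catch (TargetInvocationException ex)
            {
                // Reflection wraps the test's own exception in InnerException.
                failed++;
                Console.WriteLine("FAIL " + test.Name + ": " + ex.InnerException?.Message);
            }
        }
        return (passed, failed);
    }
}
```

Calling `SketchRunner.Run(typeof(SampleTests))` reports one pass and one failure. Real runners add a great deal on top of this (setup/teardown, filtering, richer reporting), but the basic shape is similar.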

Today’s column shows unit tests, but the general concepts apply to integration and functional tests too.

I’m going to be showing examples in C# with NUnit and Rhino Mocks, simply because that’s what I’m very comfy with. Yes, yes, we should all get out of our comfort zone and do things on other platforms, but the point of this series isn’t for you to watch me flail around while trying to learn new stuff…

Some things to keep in mind as you read posts of mine which contain code:

- I’m old school, although I try hard to keep up with new trends

- I haven’t written system code in a long time, just test code. There’s a bit of a difference.

- I am a starting point for your learning, not a destination. Look at my stuff, figure out what works and doesn’t, then go find other places to learn more.

The examples I’ll be using today are taken from the Unit Testing 101 talks I give. The examples aren’t *DD-ish because I want to focus on very fundamental pieces without adding any methodology to the mix. Like I said, old school, but the foundational principles are critical and similar regardless.

Without further ado, part one, unit test basics. The system we’ll be testing is a simple payroll wage calculator method. It’s purposely not optimized or concisely written because I use it as a starting point for some refactoring, etc. (If you’ve interviewed with me for a job you’ve likely seen a variant of this…)

Here’s the method:

    public float ComputeWages(float hours,
                              float rate,
                              bool isHourlyWorker)
    {
        if (hours < 0)
        {
            throw new ArgumentException("Hours must be greater or equal to zero "
                                        + hours);
        }

        float wages = 0;

        if (hours > 40)
        {
            var overTimeHours = hours - 40;
            if (isHourlyWorker)
            {
                wages += (overTimeHours*1.5f)*rate;
            }
            else
            {
                wages += overTimeHours*rate;
            }
            hours -= overTimeHours;
        }

        wages += hours*rate;
        return wages;
    }

This method figures wages for hourly and salaried workers based on their status (hourly/salaried), number of hours worked, and their hourly rate. Salaried workers don’t get overtime; hourly workers get time-and-a-half for anything over 40 hours. We also check for negative inputs around hours. There are other input guards we should handle, but again, this method purposely leaves off a number of things.

Let’s cover basic tests for an hourly worker first. Looking at the code we want to make sure we hit all the boundaries in this method. There’s a boundary for over/under 40 hours, so we’ll need values over and under that. I always like to specifically test zero as well, so we’ll want a value there. Finally, we’ve got a check for negative hours, so we’ll need that. Let’s just use five bucks per hour as our rate to simplify things. For the 41-hour case, that works out to 40 regular hours at 5 (200) plus one overtime hour at time-and-a-half (7.50), for 207.50 total. Here are our input values and expected outputs.

| Hours | Rate | Expected |
| --- | --- | --- |
| 0 | 5 | 0 |
| 40 | 5 | 200 |
| 41 | 5 | 207.50 |
| -1 | 5 | ArgumentException |

Let’s start with the simple happy path test first.

I mentioned fixtures above as a container for individual tests. In NUnit you create a class and decorate it with the TestFixture attribute. Other frameworks work in a similar fashion. Mostly.

    [TestFixture]
    public class When_working_with_an_hourly_worker
    {...

A test fixture should contain a logical group of individual tests. Styles of grouping and naming conventions vary dramatically between advocates of particular methodologies. I’m avoiding all that discussion here and just focusing on the fact a fixture holds tests. You need to figure out what works with your team in your environment on your project.

In NUnit a test is simply a method with public scope that’s decorated with a Test attribute:

    [Test]
    public void Computing_with_40_hours_at_rate_5_returns_200()
    {...

Now it’s time to write the actual test. Patterns for writing tests vary with methodologies, but I think there’s a common pattern regardless: Arrange, Act, and Assert. You Arrange the things you need to run the test, you Act on the system you’re testing, and you Assert to see if your actual results are what you expected.

Asserts, regardless of how they’re named in your framework/tool, are simply equality checkers of one form or another. You’re checking two strings are the same, two integers have the same value, or two collections have the same values in the same order. You’re generally asserting these conditions are equal, although you likely have many other options such as Assert.IsFalse(someBoolean), Assert.IsNotNull(somethingElse), etc.
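Here’s a quick sketch of what those checks can look like with NUnit’s classic assert methods. The fixture name, test name, and values are made up purely for illustration, and I’m assuming an NUnit 2.x/3.x-era API here; newer NUnit versions may expose these methods under different names or namespaces, so check the docs for your version.

```csharp
using System.Collections.Generic;
using NUnit.Framework;

[TestFixture]
public class Assert_examples
{
    [Test]
    public void Common_assert_styles()
    {
        // Two strings are the same
        Assert.AreEqual("hourly", "hour" + "ly");

        // Two integers have the same value
        Assert.AreEqual(40, 8 * 5);

        // Two collections hold the same values in the same order
        var expected = new List<int> { 1, 2, 3 };
        var actual = new List<int> { 1, 2, 3 };
        CollectionAssert.AreEqual(expected, actual);

        // Checks that aren't straight equality comparisons
        bool someBoolean = false;
        object somethingElse = new object();
        Assert.IsFalse(someBoolean);
        Assert.IsNotNull(somethingElse);
    }
}
```

In each equality assert the first argument is the expected value and the second is the actual one, which matters when you read the failure message.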

Here’s what our actual test will look like:

    [Test]
    public void Computing_with_40_hours_at_rate_5_returns_200()
    {
        //Arrange
        WageComputer computer = new WageComputer();
        bool isHourlyWorker = true;

        //Act
        var computedWages = computer.ComputeWages(40, 5, isHourlyWorker);

        //Assert
        Assert.AreEqual(200, computedWages);
    }

The final statement in this test is the Assert: it’s comparing the expected value (200) to the actual value returned from the call to ComputeWages. You’ll continually hear the terms “expected” and “actual” in testing parlance.

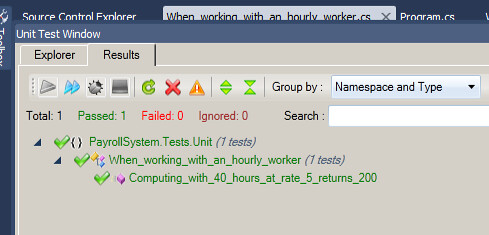

I’ll use the JustCode test runner that lives within Visual Studio to run this test. Here’s what it looks like as a passing test:

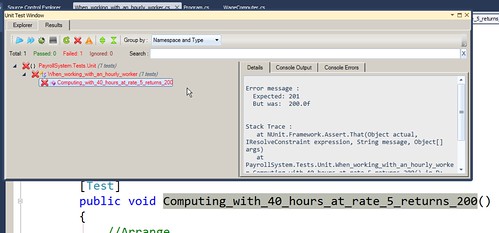

If I change the expected value in the test to an incorrect value, we’ll see what the failure looks like. Note the red colors and the detailed message on the right frame. Your test runner’s output likely varies, but the point is you get a clear failure and an exact reason why the test failed. In this case, the expected value was 201 (my manual hack) and the actual was 200.
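The zero-hour and 41-hour rows from the table follow the same Arrange/Act/Assert shape. Here’s a sketch of how they might look. I’ve assumed ComputeWages lives in a class named WageComputer (that’s what the test code uses) and copied the method in so the snippet compiles on its own. The test names and the 0.001 tolerance are my own choices for this sketch; a tolerance is a common habit when comparing floating-point values.

```csharp
using System;
using NUnit.Framework;

// Assumed container class for the ComputeWages method shown earlier.
public class WageComputer
{
    public float ComputeWages(float hours, float rate, bool isHourlyWorker)
    {
        if (hours < 0)
        {
            throw new ArgumentException("Hours must be greater or equal to zero " + hours);
        }
        float wages = 0;
        if (hours > 40)
        {
            var overTimeHours = hours - 40;
            if (isHourlyWorker)
            {
                wages += (overTimeHours * 1.5f) * rate;
            }
            else
            {
                wages += overTimeHours * rate;
            }
            hours -= overTimeHours;
        }
        wages += hours * rate;
        return wages;
    }
}

[TestFixture]
public class When_working_with_an_hourly_worker
{
    [Test]
    public void Computing_with_0_hours_at_rate_5_returns_0()
    {
        //Arrange
        var computer = new WageComputer();

        //Act
        var computedWages = computer.ComputeWages(0, 5, true);

        //Assert (expected, actual, tolerance)
        Assert.AreEqual(0, computedWages, 0.001);
    }

    [Test]
    public void Computing_with_41_hours_at_rate_5_returns_207_50()
    {
        //Arrange
        var computer = new WageComputer();

        //Act
        var computedWages = computer.ComputeWages(41, 5, true);

        //Assert: 40 regular hours (200) plus 1 overtime hour at 1.5x (7.50)
        Assert.AreEqual(207.50, computedWages, 0.001);
    }
}
```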

Really, the first three test cases are variants of this: input values and an expected output value. Let’s jump to the last of the test cases, where our expected result is an ArgumentException. Every testing framework should give you the ability to assert exceptions are happening as you expect. In NUnit that’s handled simply via an attribute. Here’s how that actual test looks:

    [Test]
    [ExpectedException(typeof(ArgumentException))]
    public void Invoking_with_negative_hours_throws_ArgException()
    {
        WageComputer computer = new WageComputer();
        var wages = computer.ComputeWages(-5, 5, true);
    }
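One caveat if you’re on a newer NUnit: the ExpectedException attribute belongs to NUnit 2.x and was removed in NUnit 3. Assert.Throws does the same job and pins the check to the exact call that should fail. Here’s a sketch of the same test in that style. As before, I’m assuming the method lives in a WageComputer class and have copied it in so the snippet stands alone; the fixture name and the message check are my own additions.

```csharp
using System;
using NUnit.Framework;

// Assumed container class; same method as shown earlier in the post.
public class WageComputer
{
    public float ComputeWages(float hours, float rate, bool isHourlyWorker)
    {
        if (hours < 0)
        {
            throw new ArgumentException("Hours must be greater or equal to zero " + hours);
        }
        float wages = 0;
        if (hours > 40)
        {
            var overTimeHours = hours - 40;
            if (isHourlyWorker)
            {
                wages += (overTimeHours * 1.5f) * rate;
            }
            else
            {
                wages += overTimeHours * rate;
            }
            hours -= overTimeHours;
        }
        wages += hours * rate;
        return wages;
    }
}

[TestFixture]
public class When_given_invalid_hours
{
    [Test]
    public void Invoking_with_negative_hours_throws_ArgException()
    {
        //Arrange
        var computer = new WageComputer();

        //Act + Assert: the lambda wraps the one call we expect to throw
        var ex = Assert.Throws<ArgumentException>(
            () => computer.ComputeWages(-5, 5, true));

        // Assert.Throws hands back the exception, so it can be inspected further
        StringAssert.Contains("Hours must be", ex.Message);
    }
}
```

With the attribute approach, an ArgumentException thrown anywhere in the test method, including setup code, counts as a pass. Wrapping only the call under test avoids that.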

You’ll note we’ve got some duplication in our two tests: we’re repeating the setup of our WageComputer class. This isn’t a huge code smell for two tests, but I’d rather not see us continue this needless duplication as we grow our tests out.

Tomorrow we’ll discuss how to deal with this situation by using setup methods, and we’ll cover their corresponding teardown equivalents. We’ll also dive into data-driven tests.

4 comments:

Any reason why you didn't mention the Visual Studio testing tools? I know they don't have many of the features that other frameworks have, like mocking and so on, but they have their uses.

Thanks for a great series. Only just discovered it, but reading fast!

@Yossu: Yes, the VS testing tools have their place. I vastly prefer other test tools in the .NET space, but good tests in VS are better than no tests!

I can't cover all the different testing tools on all the platforms. I'd take hours to write posts covering everything that's neat and useful in Java, Python, Ruby, etc. I'm struggling to keep most of this series at a bit higher level to hit common topics/concepts regardless of which platform or toolset you're using.

This is really the main reason I left off VS.

I'm glad you're enjoying the series!

Don't hold me to this but I do believe that to use the Unit Testing tools w/i Visual Studio, you need the Team System Foundation version. I know when I used VS2005, Pro edition, we didn't have access to such niceties so had to resort to NUnit.

I am enjoying your posts and am looking forward to finishing out the remainder of your series. Thank you Jim!

Anonymous, not holding you to anything, but I have the Professional version of VS2010, and there are a lot of testing tools included.

Could be that TSF has even more, but Pro has enough to do a lot of good testing.
